I’m coming back to America for the summer and thought I’d offer some tips to fix up the place before I get there. There’s so much winning left in America if it would just listen to some simple advice.

1 – Back the money with gold.

Dude, you live in a Ponzi scheme. You work in a Ponzi scheme. They didn’t invent some new brilliant thing in 1971 when they backed the money by nothing, they rugged you. They mortgaged the future to pay for the past. I love how crypto taught a generation, including me, how money works.

The US dollar circa 2026 is a shitcoin, like Ripple and Chainlink. It’s fake and made up by some dudes. And they are pulling the rug. Slowly, but it’s being pulled. Regardless of what we do, it will go the way of all fiat currencies in history and be gone. Worthless. 0. Kaput. Nada. We need to face this reality ASAP and move the money back to something whose denominator can’t be moved just cause some dude said so.

The best time to do this was 50 years ago. The second best time is now. Every reason for not doing this is cope from people who want the economy to stay rigged.

2 – End all entitlement programs.

Are you kidding me with all this crap? You get what you incentivize. You give people money for not having a job, boom, no job. You give people healthcare for being poor, boom, poverty. You give farmers money for growing corn, boom, corn syrup in everything. You give old people money for being old, boom, boomers never die and hoard all the wealth. And then every other group just whines and says they want entitlements too.

The government should never ever hand out money to anyone. Not poor people, not old people, and not corporations. This creates a society of beggars and lobbyists. You get what you incentivize.

3 – Acknowledge all men are not literally created equal.

I’m a Christian, and so were most of the founders of America. I’m not a fundamentalist. I don’t believe the Earth was created 6,000 years ago, I think Mary was probably raped by a Roman soldier¹, and I think Jesus had a kick-ass time at the last supper planning the whole came-back-to-life thing with his boys and his girlfriend.

This doesn’t change the moral value of Christianity. Whether the stories are word-for-word true or not isn’t the point. I believe Jesus was the son of God and He died for my sins. The mechanics of how and all the little details? That’s just what God needed to do to make the universe appear self-consistent to us. Believing despite the lack of observable miracles is the point of having faith.

Unlike fundamentalist wokists, the founders didn’t literally believe all men were created equal. Height is heritable, skin color is heritable, and IQ is heritable. This doesn’t change the brilliance of the Constitution. Morally, all men are equal. Under the law, all men are equal. And under the Constitution, all men are equal.

It’s not literal. Racial group differences are mostly not caused by racism. Gender differences are mostly not caused by sexism. You think Kenyans run fast cause of racism? You think women are short cause of sexism? That’s how stupid wokists are.

The moral principle of equality is solid, and people who believe in blank slatism do a serious disservice to civil society. By not acknowledging what is blatantly true they undermine the moral principle, which is an ought and would be a really sad thing to lose.

4 – Massive scale high skill immigration.

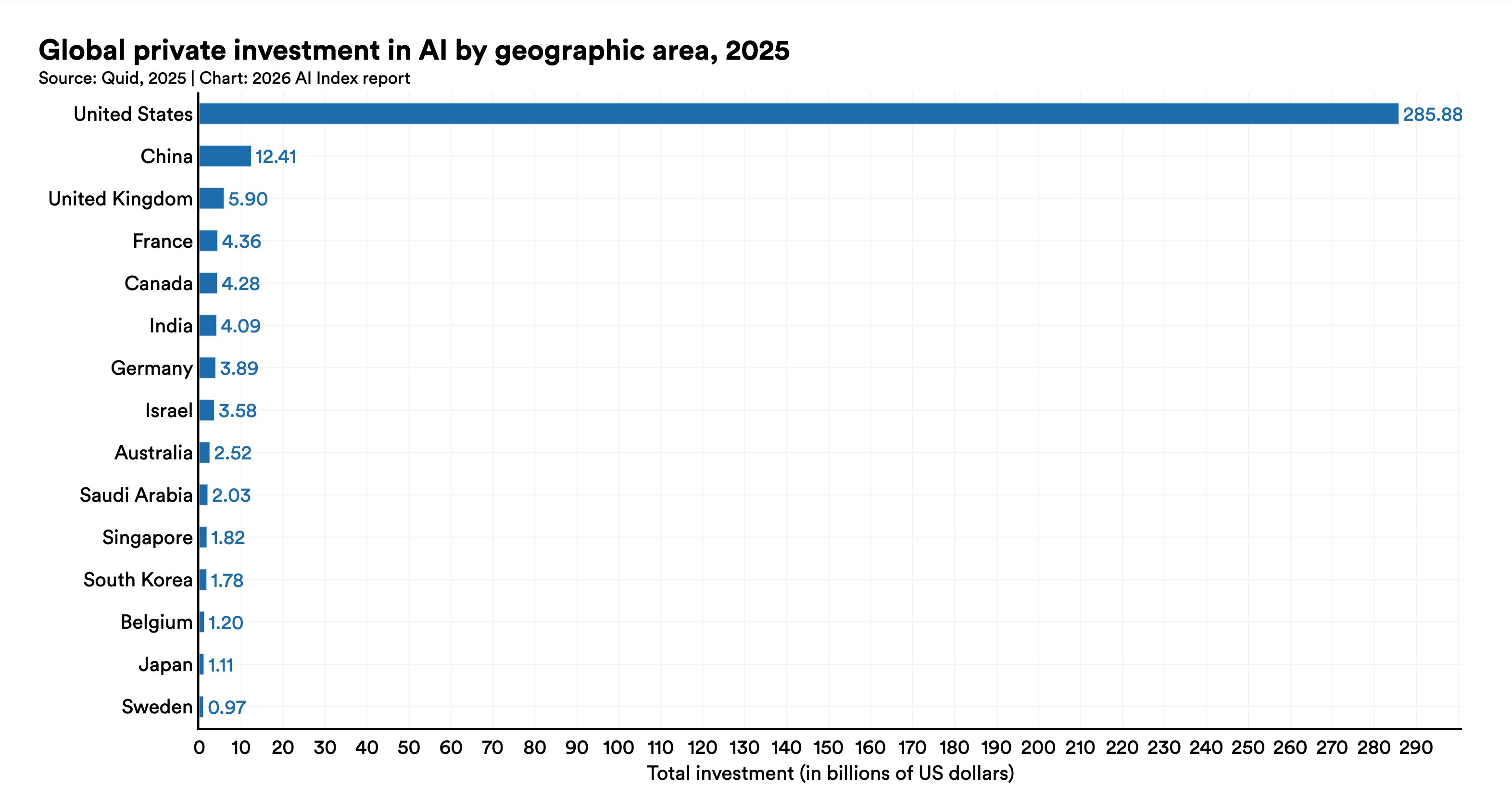

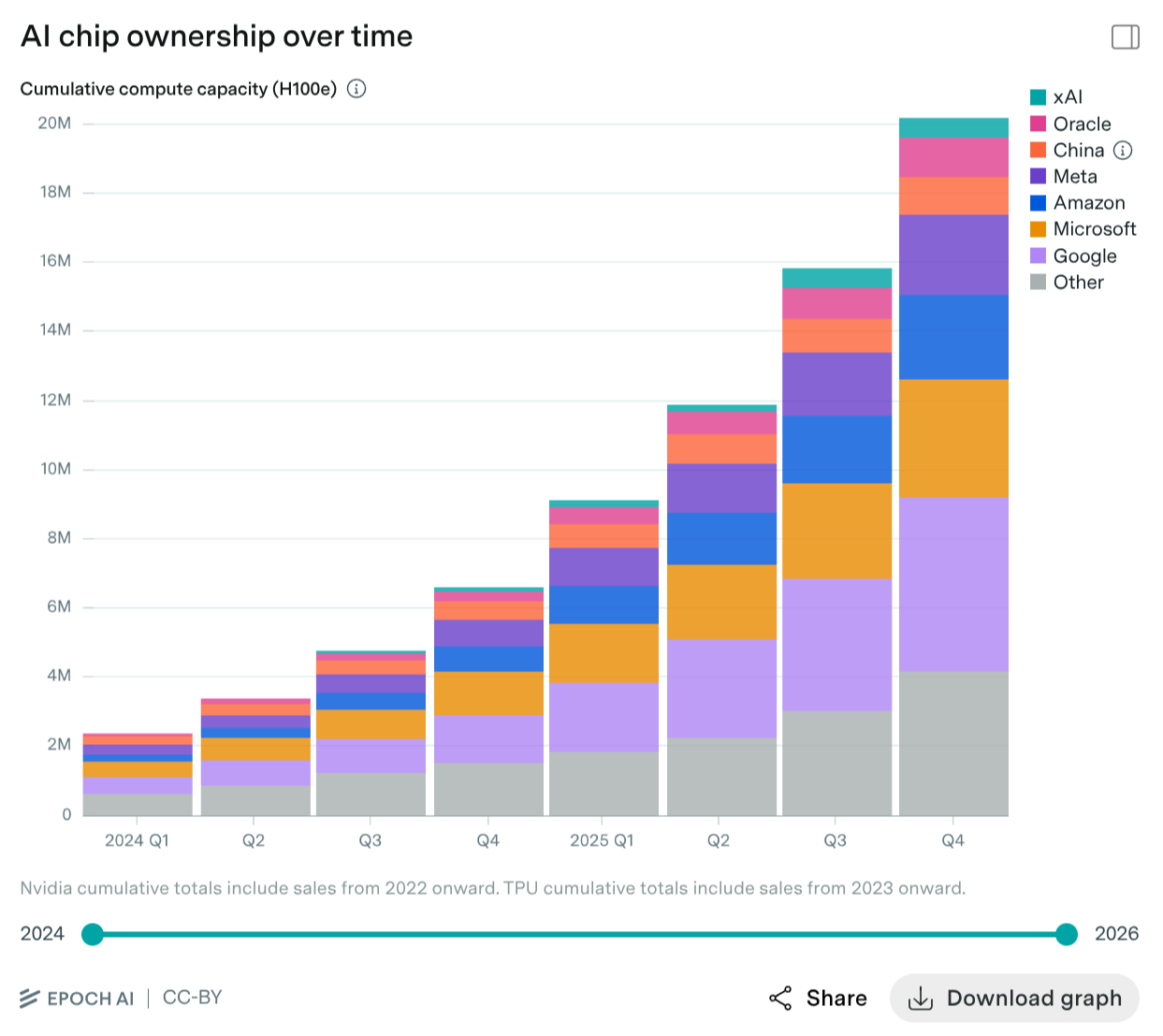

You wanna beat China? The US has 350 million people. China has 1.4 billion. And unlike India with a 76 average IQ, China has a 107 average IQ. They are smarter than the average American, they are hard working, and they have a better education system. So what do you think Americans have? Guts? Freedom? Guns? That old American Spirit? All bullshit.

America has won for one simple reason: anyone can come be a part of it, and the best people in the world want to. Jensen Huang is from Taiwan, he is an American. Elon Musk is from South Africa, he is also an American. They have created trillions in value. This is why America works. People come to America to create value.

I live in Hong Kong. While it’s more multicultural than the mainland, I will never be Chinese. I have no interest in creating a large scale business in China since I can’t own it. As of 2026 as a random White guy, you can’t just join the Chinese project, it’s not for you.

If America wants to continue winning, it needs to welcome all the best people with open arms. Sure, we don’t want people in America to mooch, but as long as we did step 2, this isn’t a problem. Every single positive sum person should be welcome.

Want to beat China? Figure out how to get the most talented Chinese people to move to America. Recruiting the talent from the other team is winning on both sides. Imagine a 20-million-person Chinese city in Arizona. It’s not like we don’t have the space, the USA and China have very similar land areas.

As long as this doesn’t happen, America will just lose. This was America’s key historic advantage.

5 – Crack down on negative sum behavior.

This is the most nebulous of the five, but perhaps the most important. This is what properly done regulation is. You don’t want no regulation, you want good regulation.

There’s obvious negative sum behavior like violent and property crime. What kind of weak government lets this happen on their turf? Your average drug cartel wouldn’t tolerate it. A government is a monopoly on violence, and every time unsanctioned violence happens on your territory that’s an affront to your sovereignty.

Then there’s things like advertising and private equity. We live in a society. Add up the sum of what someone is doing across all of society. If you are taking $1,000 from someone to create $900 of value for yourself, this is bad. Society is losing $100.

This is the least complete of the five, and will require we get some smart people in government to figure out all the nuance. But once we have sound money, no redistribution, meritocracy, and the smartest people in the world, I think we can figure it out.

When we don’t do this, and the losing and demoralization continues, don’t say I didn’t tell you so. 5 simple steps. We could turn it around so fast. We could have another American century. But realistically, these things won’t happen and we’ll have a century of humiliation.

Also, not a single word of this was written by AI. I pasted it in for a quick vibe check, and ChatGPT was offended first by my blasphemy against wokism, then by my blasphemy against Christianity. I followed up for like 5 minutes and it apologized and admitted I was right. Good sycophantic clanker. Reason from first principles and not experts next time.

¹I actually did a lot of research into this one. It was invented in the 2nd century by Celsus, a Greek philosopher. I chose it to be inflammatory partially cause I want to soften the blow to the wokists’ shibboleths, but I also think it’s the most likely set of events. We are all scientific materialists in reality, and virgin births don’t happen.

This leaves three possibilities for the father: Joseph, a consensual affair, or a non-consensual one. If it was Joseph, why the story? A premarital pregnancy was scandalous, but if he was the father, why would he want to divorce her to save her from disgrace? (Matthew 1:19) The Bible is also clear that he didn’t sleep with her until after Jesus’s birth. (Matthew 1:25)

So that leaves consensual affair or assault. A consensual affair resulting in pregnancy would be too much to forgive. They hadn’t slept together yet, divorce her and be done with it – she can go back to that guy. But an assault? It’s still a lot to forgive, though maybe not for the man who raised Jesus. Don’t punish Mary for what happened, turn the other cheek. He decides on the virgin birth story (Matthew 1:20), he saves face, and goes on to have several biological children with her (Mark 6:3).
